- Why: Existing affordance prediction focus on pinhole cameras with narrow FoV and fragmented context. Panoramic cameras, which capture global spatial relationships and holistic scene understanding, offer a natural solution to this bottleneck.

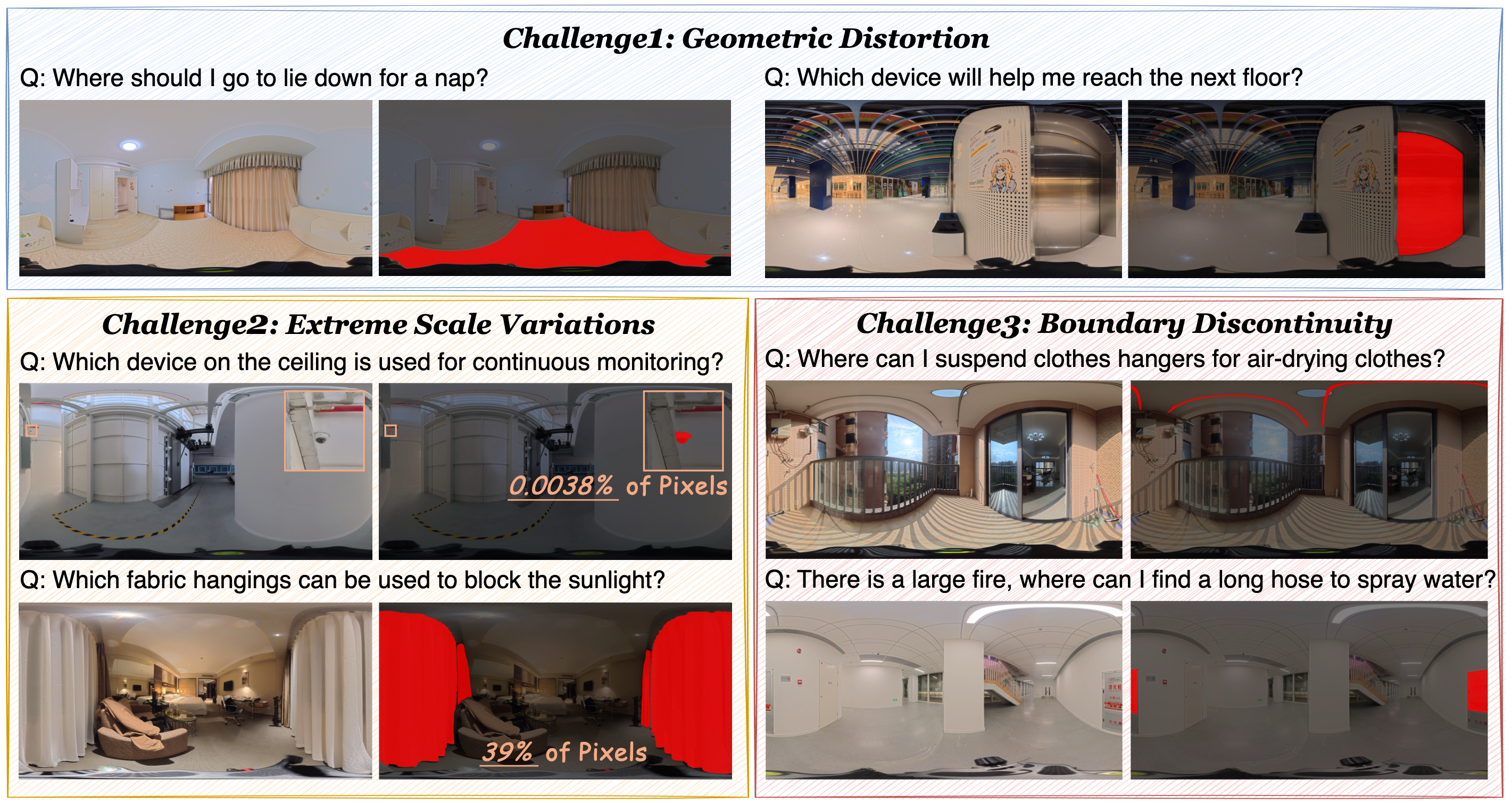

- What: We built PAP-12K, a real-world 12K panoramic benchmark dataset with dense affordance task annotations and panoramic-specific challenges.

- How: Our pipeline PAP uses Recursive Visual Routing + Adaptive Gaze + Cascaded Grounding to deal with panoramic challenges and achieves robust zero-shot performance.

PAP-12K Dataset

Dataset Preview

Preview one sample scene per scene type from PAP-12K. You can click object buttons to inspect their segmentation masks and affordance questions.

PAP Framework

PAP is a training-free coarse-to-fine pipeline inspired by human foveal vision.

1) Recursive Visual Routing

Prompt-guided zoom-in search localizes candidate actionable regions efficiently in ultra-high-res panoramas.

2) Adaptive Gaze

Local spherical regions are reprojected to perspective views to remove ERP distortion and boundary artifacts.

3) Cascaded Grounding

Open-vocabulary detection + segmentation extract precise instance-level affordance masks on rectified patches.

Key Results

Quantitative Snapshot

Qualitative Results

Qualitative visualization of our method. PAP demonstrates superior reasoning and grounding ability in 360° panoramas, effectively interpreting complex queries to accurately localize actionable regions despite geometric distortions and massive background clutter.

BibTeX

@article{zhang2026pap,

title={Panoramic Affordance Prediction},

author={Zhang, Zixin and Liao, Chenfei and Zhang, Hongfei and Chen, Harold Haodong and Chen, Kanghao and Wen, Zichen and Guo, Litao and Ren, Bin and Zheng, Xu and Li, Yinchuan and Hu, Xuming and Sebe, Nicu and Chen, Ying-Cong},

journal={arXiv preprint arXiv:2603.15558},

year={2026}

}